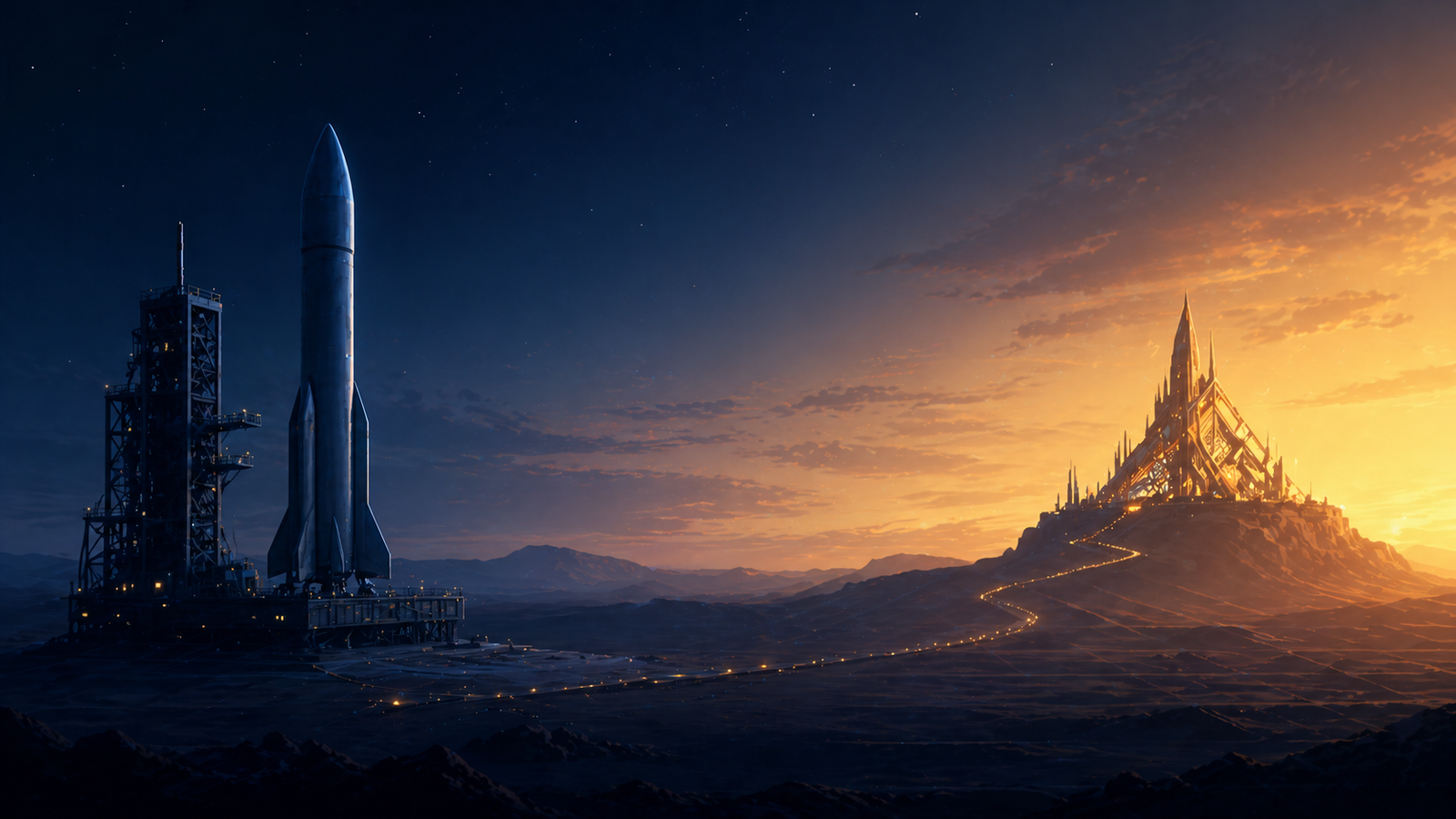

An important launch pad. The destination is somewhere else.

On January 30, 2026, Anthropic quietly published eleven open-source Claude plug-ins to GitHub. No launch event. Just a set of markdown and JSON files dropped into a public repository.

The stock market noticed.

Within days, roughly $285 billion in market value evaporated from enterprise software companies. Thomson Reuters had its biggest single-day drop in company history, down sixteen percent. LegalZoom fell twenty percent. RELX, the parent of LexisNexis, lost fourteen percent. Salesforce, ServiceNow, and Adobe each dropped around seven percent.

Some analysts dubbed it a SaaSpocalypse.

The plug-ins themselves are small. Hundreds of kilobytes total. What rattled the market wasn’t the size. It was the implication. For the first time, investors saw plug-ins not as a feature that helps SaaS work better, but as something that could potentially replace task-level SaaS work entirely.

Gartner’s analysts hedged the take, calling them “potential disrupters for task-level knowledge work but not a replacement for SaaS applications managing critical business operations.” Even hedged, the message was clear. The category had crossed a line.

I’ve been designing AI-native workflows for a few years. Plug-ins for software teams. Plug-ins for my own business operations. Custom plug-ins for clients across different industries.

As the discourse around plug-ins has exploded since January 30, I keep noticing the same framing problem. Most of the conversation treats opinionated open-source Claude plug-ins like Superpowers, or the Anthropic-shipped plug-ins themselves, as if they’re the destination for AI-native workflows.

They’re not. They’re a launch pad, and a really good one. But treating a launch pad as the destination is exactly why most teams I’ve watched hit a ceiling and quietly go back to babysitting their agents one task at a time.

This post covers three things in sequence:

- Where AI-native work is actually heading, and why that changes the plug-in conversation entirely.

- Why off-the-shelf opinionated plug-ins, however good, structurally can’t get you to that destination.

- What the destination actually looks like in practice, plus a concrete four-step path you can take from wherever you are today.

If you’ve been collecting opinionated plug-ins assuming they’ll add up to a real AI-native workflow, this post is going to challenge that. If you’ve watched your team plateau after the initial Superpowers honeymoon, this post is going to tell you why. And if you’re in a non-engineering function watching engineering teams adopt these tools and wondering whether any of this applies to you, this post is for you too. Especially you.

What we mean by Claude plug-ins

For readers who haven’t gone deep yet, here’s the working definition.

A Claude plug-in is a bundled set of skills, commands, agents, and external integrations that change how Claude behaves. You install one, and Claude starts handling certain kinds of work in a specific, opinionated way, without anyone typing “do it like this” every time.

A skill is the most common ingredient. It’s a self-contained set of instructions for handling a particular kind of task. When you ask Claude to do something that matches a skill’s description, that skill loads and the agent follows the encoded approach. (For a deeper dive on the primitives that make up plug-ins, see my Claude Code primitives guide.)

The category-level point is what matters: Claude plug-ins encode opinionated, repeatable approaches to work, so the human doesn’t have to direct the agent through every step.

Where AI-native work is actually heading

The trajectory of AI-native work isn’t toward better human-in-the-loop. It’s toward less human-in-the-loop. Eventually, no human-in-the-loop except at the start and the end.

The destination is a workflow where the human sets the goal, names the constraints, defines what good looks like, and hands off. The agent (or team of agents) goes off, executes, and comes back when there’s something for the human to review.

That’s what the market reaction at the top of this post was actually pricing in. Both Anthropic and Gartner used the phrase “task-level knowledge work” deliberately. Not “tools that help humans do tasks faster.” Tools that own tasks.

Now ask yourself the question that matters. If you were going to hand off a complex task to an AI agent right now and tell it, “here’s the goal, here’s what good looks like, come back when it’s done,” could you?

For most teams, the answer is no.

There are teams already doing exactly that, though, and the existence of those teams matters for this argument. As of early 2026, Stripe’s engineering team is running a system they call “Minions”, homegrown autonomous coding agents that write code end to end and merge more than a thousand pull requests per week. Humans review the code. The agents do everything else.

Stripe is not a typical team. They’re a deeply mature engineering organization, with mature processes and tooling and documentation already in place, all of which made the transition to autonomous agents more natural than it would be for most.

That caveat matters. What also matters is that it has been done, at scale, in production, by a team that put in the work to encode their environment, their conventions, their build systems, and their review gates in a way their agents could trust. The autonomous-with-review pattern isn’t theory. It’s a public reference case. The question for the rest of us isn’t whether the destination exists. It’s whether we’ll be ready to operate there when more of the industry follows.

You can’t hand off a complex task to an autonomous agent without telling it:

- Your workflow and process

- Where to find the knowledge it needs

- Where your conventions live

- What your rules are

- Which external systems to interact with and how

- What your review gates and quality bars are

- Where the human approval points are

You can do that two ways.

You can type it all in every single task. That’s babysitting. You may already be doing it.

Or you can encode it once, in a Claude plug-in or some other mechanism your team builds for the purpose. Plug-ins are the most accessible mechanism for most teams using Claude today. Stripe’s Minions is a different mechanism, a custom-built system end to end. The category-level point is the same: repeatable, environment-aware encoding of your team’s reality. The mechanism varies. The need does not.
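To make “encode it once” concrete, here’s a minimal sketch of the seven items listed above as a single record, plus a check for what’s still missing before a handoff. It’s Python purely for illustration; a real plug-in would carry this in skills and configuration files rather than code. `HandoffManifest`, its field names, and `missing_items` are all hypothetical.

```python
from dataclasses import dataclass, field, fields


@dataclass
class HandoffManifest:
    """Hypothetical record of what an agent needs before a hands-off task."""

    workflow: str = ""                                   # your workflow and process
    knowledge_sources: list[str] = field(default_factory=list)
    conventions_location: str = ""                       # where conventions live
    rules: list[str] = field(default_factory=list)
    external_systems: dict[str, str] = field(default_factory=dict)  # system -> how to reach it
    review_gates: list[str] = field(default_factory=list)
    approval_points: list[str] = field(default_factory=list)


def missing_items(manifest: HandoffManifest) -> list[str]:
    """Return the names of manifest entries that are still empty."""
    return [f.name for f in fields(manifest) if not getattr(manifest, f.name)]


draft = HandoffManifest(
    workflow="plan, implement, review",
    rules=["no direct pushes to main"],
)
print(missing_items(draft))  # everything you would otherwise retype per task
```

Anything the check reports as missing is something you’re still typing in by hand.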

There is no third option that scales. There certainly isn’t a “generic off-the-shelf plug-in does it for you” option, because by definition the generic plug-in doesn’t know any of your specifics.

This is the urgent part. The autonomous-with-review future isn’t a five-year horizon. The conversation is already happening, and some teams are already operating there. The teams that get there first will be the teams that have already done the work to encode their environment. The teams still relying on generic Claude plug-ins won’t be able to participate, because their agents won’t know enough to be trusted with the handoff.

Off-the-shelf opinionated plug-ins are a useful step on the way. They are not, and cannot be, the destination. Now let’s look at why.

A note before we go further

We’re going to use software engineering examples throughout this post. It’s where the patterns are clearest and the failure modes are most visible.

If you’re not in engineering, keep reading.

The math doesn’t change with the domain, because the failure mode doesn’t change with the domain. An agent that doesn’t know YOUR world becomes a babysitting job. That’s true in software engineering. It’s true in legal, finance, HR, support, marketing operations, every function.

Use the engineering examples. Substitute your reality. The argument lands the same way.

The maturity progression most teams move through

I’ve watched a clear progression over the last few years.

State 1: Ad-hoc prompting. Someone has an AI tool. They start each task by typing context into the chat. The agent does interesting work, but every task starts cold.

State 2: Off-the-shelf opinionated plug-in. The team installs Superpowers, Anthropic’s Legal plug-in, or one of the dozens of community plug-ins now available. They get a methodology. Skills get triggered automatically. The work becomes more consistent than ad-hoc.

State 3: Environment-aware customized plug-in. The plug-in knows the team’s tools, conventions, knowledge sources, and review gates. The agent isn’t just methodologically opinionated. It’s contextually wired into the team’s actual external world.

Most teams know the leap from State 1 to State 2. They install Superpowers, see immediate improvement, and assume they’ve arrived.

They haven’t. State 3 is where the real productivity lives, and it’s the only place from which the autonomous-with-review future is reachable. State 2 cannot get you there. By design.

The ceiling: what off-the-shelf, by design, can’t do

Let’s get concrete with two specific plug-ins, one from software development and one from legal.

Superpowers: a methodology, by deliberate design

Superpowers is a popular open-source plug-in for software development work. It ships fourteen skills, three commands, one agent, and one session-start hook. Skills include writing-plans, requesting-code-review, test-driven-development, and finishing-a-development-branch.

It’s a methodology in a box, and the methodology is thoughtful.

What it doesn’t ship: any integration with the systems your team actually uses. Search the entire repo for “jira,” “linear,” “notion,” “confluence,” or “slack” and you’ll find zero hits. No MCP servers bundled. The only external surface is git and the gh CLI.

This isn’t an oversight. It’s the explicit design philosophy. The Superpowers contributor guide states it plainly: “Superpowers is a zero-dependency plugin by design. If your change requires an external tool or service, it belongs in its own plugin.” It also explicitly rejects “skills, hooks, or configuration that only benefit a specific project, team, domain, or workflow.”

Their job is to ship a generic methodology. It’s not their job to know your stack.

In practice, that means the writing-plans skill produces a markdown plan saved to a default local path. The requesting-code-review skill takes a base SHA, head SHA, and free-text description as inputs, then dispatches the bundled code-reviewer against the local diff.

It doesn’t pull acceptance criteria from your ticketing system. It doesn’t post a review summary to your team’s chat. It doesn’t gate on a Confluence runbook. It doesn’t honor CODEOWNERS or your required-reviewer rules.

Every one of those is something you’d want for a real production workflow. Every one is something you’d have to bolt on yourself.

The community is already doing exactly that. There are well over eighty public forks of Superpowers, each adding domain or team specifics that the core project deliberately won’t accept upstream. That’s a market signal.

Operators are flagging something else worth noting. Multiple users running Superpowers have reported higher-than-expected token consumption on tasks they expected to be cheap. Whatever the specific cause inside the plug-in’s design, the pattern shows up consistently enough that experienced operators are talking about it.

Anthropic’s Legal plug-in: same shape, different domain

Switch domains. The Anthropic Legal plug-in includes skills like triage-nda, review-contract, vendor-check, and legal-risk-assessment.

The triage-nda skill is good. It rapidly classifies an incoming NDA as GREEN (standard approval), YELLOW (counsel review needed), or RED (full legal review).

Read what the skill itself says about playbook configuration: “Look for NDA screening criteria in local settings (e.g., legal.local.md). If no NDA playbook is configured: Proceed with reasonable market-standard defaults.”

The defaults are reasonable. They’re also generic.

Your firm has its own carveouts, jurisdictional preferences, client-confidentiality precedents, routing rules, and delegation of authority. The skill itself acknowledges this. It tells you to put YOUR specifics in legal.local.md.
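The fallback the skill describes is simple enough to sketch. This is not the plug-in’s implementation (the skill is written as instructions for the agent, not as code); it’s a minimal Python illustration of “use the local playbook if there is one, otherwise use generic defaults.” The function name, the placeholder default text, and the sample playbook line are all made up.

```python
import tempfile
from pathlib import Path

# Hypothetical placeholder; the plug-in's actual default criteria are not reproduced here.
GENERIC_DEFAULTS = "market-standard NDA screening criteria"


def load_nda_playbook(settings_file: Path) -> tuple[str, str]:
    """Return (source, criteria): the local playbook if one exists, else generic defaults."""
    if settings_file.is_file():
        text = settings_file.read_text(encoding="utf-8").strip()
        if text:
            return "local", text
    return "default", GENERIC_DEFAULTS


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        settings = Path(tmp) / "legal.local.md"
        print(load_nda_playbook(settings))  # no file yet: falls back to defaults
        # Made-up sample content standing in for a firm's real playbook.
        settings.write_text("Mutual NDAs only; two-year term cap.\n", encoding="utf-8")
        print(load_nda_playbook(settings))  # the local playbook now wins
```

The point of the sketch is the second branch: until you write that file, every triage runs on someone else’s defaults.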

What the plug-in does not do: integrate with your contract lifecycle management system, route signed agreements through your DocuSign template library, pull from your Box or Egnyte folder structure, post triage outcomes to your firm’s chat channel, or follow your firm’s specific routing rules to a named designated reviewer.

Same structural shape as Superpowers, in a completely different domain. A solid methodology. Sensible defaults. A legal.local.md (or its equivalent) where your specifics are supposed to go. Generic by design. Your wiring required.

This is a category-level pattern. It’s not a critique of either plug-in. It’s a recognition of what off-the-shelf, by definition, has to be.

Same task, very different shapes

Look at one specific slice across two plug-ins: producing an implementation plan for a piece of work. Both Superpowers and a custom Atlassian-wired Claude plug-in have an explicit concept here. The difference is in what each one does before any planning starts.

Superpowers takes whatever spec you type and writes a plan. Useful, but the agent starts every task with no automatic knowledge of where your work lives, what your team’s conventions are, or what tech decisions have been made. That’s not a Superpowers flaw. It’s the design philosophy. Generic methodology, no environment.

The custom plug-in starts every task by automatically pulling six things, before any planning happens: a project manifest pointing to the team’s external systems, the team’s specific rules and conventions, the actual Jira issue via the Atlassian MCP, the tech stack detected from package files, current library documentation via Context7, and the team’s Confluence tech decisions pages. Without the developer typing any of it.

The result is consistency. The same workflow runs ticket after ticket. The same rules apply, the same actions execute, regardless of which engineer is at the keyboard. You don’t have to tell the agent about Jira on Monday and re-tell it on Tuesday. You don’t get one shape from one engineer and a different shape from another. That level of repeatability, ticket to ticket and human to human, isn’t reachable with a generic Claude plug-in like Superpowers.
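Here’s a rough sketch of why that repeatability falls out of the design: the six pre-planning loads described above run as one fixed sequence, and planning doesn’t start on a partial picture. The loaders are stubs with hypothetical names. Nothing here calls Jira, Confluence, or Context7, and a real plug-in would express this in skill instructions and tool configuration rather than Python.

```python
from typing import Callable

# Stub loaders keyed by the six sources named above. Every key and return
# value is hypothetical; no external system is contacted.
CONTEXT_LOADERS: dict[str, Callable[[], str]] = {
    "project_manifest": lambda: "pointers to the team's external systems",
    "team_rules": lambda: "rules and conventions",
    "ticket": lambda: "the Jira issue for this task",
    "tech_stack": lambda: "stack detected from package files",
    "library_docs": lambda: "current docs for the detected libraries",
    "tech_decisions": lambda: "tech decision pages",
}


def gather_context(loaders: dict[str, Callable[[], str]]) -> dict[str, str]:
    """Run every loader in a fixed order and refuse to plan on a partial picture."""
    context: dict[str, str] = {}
    for name, load in loaders.items():
        value = load()
        if not value:
            raise RuntimeError(f"context source {name!r} returned nothing")
        context[name] = value
    return context


context = gather_context(CONTEXT_LOADERS)
print(list(context))  # same six keys, same order, on every ticket
```

Because the sequence lives in the plug-in and not in anyone’s head, it doesn’t vary with who is at the keyboard.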

Then the downstream consequence. Superpowers’ plan ends up as a standalone markdown file that no other skill references. The custom plug-in’s plan is linked to the Jira issue and gets compared, automatically, against the actual implementation when the work wraps up. A deviation review catches drift before the PR goes for human review.

This is what “environment-aware” means concretely. The methodology layer is similar between the two. The wiring isn’t even close.

Three structural reasons customization is unavoidable

The “off-the-shelf can’t bake in your environment” argument is the surface argument. Underneath it sit three structural realities.

Repeatability is dramatically underestimated

Repeatability is the most underestimated property of an AI-native workflow.

Sit with that for a moment.

When a software engineer picks up a ticket today and works with their AI agent, what do they have to teach the agent every single time?

- Where Jira lives, and that we even use Jira

- Which tool to use to fetch the ticket

- How we want the ticket analyzed

- How we want external library context gathered

- Where our team’s conventions and rules live

- How we do security review

- When to create a feature branch and when not to

- When to offer a pull request and when to wait

- Where the human approval gates are

- How we transition the ticket through workflow states

Every ticket. Every time.

This is the babysitting tax, and it’s invisible until you list it out.

A finance lead working a month-end close has the same problem. So does an HR ops manager onboarding a new hire. So does a lawyer triaging incoming NDAs. The list of things they shouldn’t have to re-teach changes by domain. The cost of re-teaching is the same.

A customized plug-in encodes all of that. The agent knows. You don’t have to direct.

Token cost compounds, quietly and quickly

The babysitting tax has a real economic cost, hidden in your API bill.

To be clear about what we’re talking about: the agent absolutely should read your codebase, your tickets, and your task-relevant artifacts. That’s the agent doing useful work. The cost we’re talking about is something else, and it’s specifically what a well-designed customized plug-in (or other encoding mechanism) eliminates.

Three failure modes that environment-aware encoding eliminates:

Rediscovering orientation. Without encoded environment knowledge, the agent has to figure out things you already know. It tries to infer the team’s tooling from whatever signals it can find, looking for Jira config when the team uses Linear, searching for a Notion-style directory when docs are in Confluence. That’s the agent rediscovering, every task, things you haven’t encoded.

Asking. When the agent doesn’t know your conventions, it asks. Each round-trip pulls the entire conversation context back through the model. Multi-turn clarification is one of the most expensive patterns there is.

Guessing and recovering. When the agent doesn’t know your rules, it guesses, fails, and recovers. Each failure cycle costs roughly two to three times the tokens of doing it right the first time, because the model has to read the failure output, reason about it, and try again.
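A bit of back-of-the-envelope arithmetic shows why the asking pattern gets expensive. The sketch below assumes each turn re-sends the whole conversation so far, and the token counts are invented for illustration. It also ignores prompt caching, which can lower what you’re actually billed where it’s available.

```python
def cumulative_input_tokens(base_context: int, tokens_per_turn: int, turns: int) -> int:
    """Total input tokens when every turn re-sends the conversation so far."""
    return sum(base_context + i * tokens_per_turn for i in range(turns))


# Illustrative numbers only: a 20,000-token working context, with each
# clarification exchange adding 500 tokens to the conversation.
one_pass = cumulative_input_tokens(20_000, 500, turns=1)
with_three_questions = cumulative_input_tokens(20_000, 500, turns=4)

print(one_pass)              # 20000
print(with_three_questions)  # 83000
```

With these made-up numbers, three clarifying questions push input tokens from 20,000 to 83,000, a little over four times the single-pass cost. The context dominates, not the questions themselves.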

These failure modes aren’t inherent to off-the-shelf plug-ins specifically. They’re the consequence of any plug-in not knowing the operator’s environment, including a custom plug-in that’s been poorly designed. The advantage of a well-designed customized plug-in is that the agent doesn’t rediscover, doesn’t ask, and doesn’t guess about the things the plug-in already encodes.

One caveat. This advantage assumes the plug-in is well-designed, using progressive disclosure (skill descriptions in context, skill bodies loaded only when triggered) and lazy-loaded components. A poorly architected plug-in that crams everything upfront could be worse than a lean off-the-shelf one. The claim is “well-designed customization beats generic.” Build it right.
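For readers who want the shape of progressive disclosure, here’s a minimal sketch: every skill’s short description is always in context, and a skill’s full body is loaded only when that skill is triggered. In Claude, the model decides which skill applies; the `triggered` argument here simply stands in for that decision. `Skill`, `context_for`, and the sample skill text are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Skill:
    name: str
    description: str               # short, always present in context
    load_body: Callable[[], str]   # called only when the skill is triggered


def context_for(skills: list[Skill], triggered: set[str]) -> str:
    """Build context from every description plus the bodies of triggered skills only."""
    parts = [f"{s.name}: {s.description}" for s in skills]
    parts += [s.load_body() for s in skills if s.name in triggered]
    return "\n".join(parts)


skills = [
    Skill("plan-ticket", "Plan work from a ticket.", lambda: "plan-ticket body " * 200),
    Skill("security-review", "Review a diff for security issues.", lambda: "security body " * 200),
]

lean = context_for(skills, triggered={"plan-ticket"})
everything = context_for(skills, triggered={s.name for s in skills})
print(len(lean) < len(everything))  # the untriggered body never entered context
```

The “crams everything upfront” failure mode is the `everything` case on every single task.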

Your workflow will evolve. Whose plug-in evolves with it?

This isn’t talked about enough.

Whatever Claude plug-in you’re using today, your workflow is going to evolve. Guaranteed, on at least three timelines.

Your team’s process will change. Patterns that work today will reveal gaps. New requirements will surface.

The model layer will change. The skill that worked beautifully against today’s frontier model may behave differently against the next major release. This has happened to every team running AI in production over the last two years.

Your tools will change. Your team migrates from Jira to GitHub Issues. Your CI moves from CircleCI to GitHub Actions. Your knowledge base moves from Confluence to Notion. Pick a three-year horizon and tell me with a straight face that none of that will happen.

Now ask: who controls the evolution of an off-the-shelf plug-in?

The maintainer. On their timeline. With their priorities.

The Jira-to-GitHub-Issues case is the cleanest illustration. An off-the-shelf plug-in with hard-coded Jira integration cannot bridge that swap without a fork-and-maintain commitment, at which point you’re doing customization anyway, just reactively.

Your own plug-in can. With Claude doing the actual code changes and you driving the design, the migration is a conversation, not a project.
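One way to keep that migration small is to have the workflow depend on a narrow interface rather than on a vendor. The sketch below shows the shape in Python; in a plug-in you’d express the same idea in skill instructions and tool configuration. `TicketSource`, both stub classes, and `start_planning` are hypothetical, and the stubs make no real API calls.

```python
from typing import Protocol


class TicketSource(Protocol):
    """What the workflow needs from any ticket system (hypothetical interface)."""

    def fetch(self, ticket_id: str) -> dict[str, str]: ...


class JiraSource:
    """Stub only; a real version would go through your Jira integration."""

    def fetch(self, ticket_id: str) -> dict[str, str]:
        return {"id": ticket_id, "system": "jira"}


class GitHubIssuesSource:
    """Stub only; a real version would go through your GitHub integration."""

    def fetch(self, ticket_id: str) -> dict[str, str]:
        return {"id": ticket_id, "system": "github-issues"}


def start_planning(source: TicketSource, ticket_id: str) -> str:
    """The workflow step depends on the interface, not on the vendor."""
    ticket = source.fetch(ticket_id)
    return f"planning {ticket['id']} (from {ticket['system']})"


print(start_planning(JiraSource(), "PROJ-12"))
print(start_planning(GitHubIssuesSource(), "345"))
```

Swapping ticket systems then means replacing one adapter. The planning step, the review gates, and everything downstream stay as they were.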

And the case keeps building

A few more reasons that are each a follow-up post on their own:

- Onboarding compresses dramatically when team conventions are encoded. New hires get the agent that already knows how things work.

- Tribal knowledge stops walking out the door when senior people leave.

- Every refinement compounds across every future task, in the way all good systematization does.

The structural case for customization isn’t one big argument. It’s a stack of mutually reinforcing ones.

What State 3 looks like, from a plug-in I’ve built

Abstract arguments fall flat. Let me make this concrete.

I’ve built a software development workflow plug-in that I use on my own engineering projects and have adapted into variants for different team setups. Three working variants today.

- One for teams on Atlassian. Jira for tickets and sprint management. Confluence for documentation, tech decisions, conventions, and PRDs.

- One for teams on GitLab and Google Drive. Same workflow shape, different external systems.

- One that doesn’t rely on any external SaaS at all. Work items, design docs, PRDs, every project artifact, all live as structured files inside the codebase. The plug-in teaches the agent how and where to store work items, when and how to access them, and how to move them through to-do, in-progress, and completed states.

All three share the same opinionated workflow cycle. Same sub-agent patterns for code-health and security review. Same plan-versus-implementation deviation review. Same human-in-the-loop gates. The only thing that changes between variants is the external wiring.

Same workflow DNA. Different operational reality.
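For the third variant, the to-do, in-progress, completed flow amounts to a small state machine. Here’s a minimal sketch of that idea. It assumes work items only move forward, which is my simplification for the example and not a rule stated anywhere above; `State`, `ALLOWED`, and `transition` are hypothetical names.

```python
from enum import Enum


class State(Enum):
    TODO = "to-do"
    IN_PROGRESS = "in-progress"
    COMPLETED = "completed"


# Assumption for this sketch: items only move forward, one step at a time.
ALLOWED: dict[State, set[State]] = {
    State.TODO: {State.IN_PROGRESS},
    State.IN_PROGRESS: {State.COMPLETED},
    State.COMPLETED: set(),
}


def transition(current: State, target: State) -> State:
    """Return the new state, or raise if the move is not allowed."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target


state = transition(State.TODO, State.IN_PROGRESS)
state = transition(state, State.COMPLETED)
print(state.value)  # completed
```

Encoding the allowed moves once is what lets the agent manage work items without being told the rules on every ticket.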

Here’s what a typical engineering ticket looks like flowing through any of these variants.

A developer picks up a ticket. They invoke the planning workflow. The agent already knows the team’s tooling, whether that’s a Jira project, a GitLab issue, or a structured markdown file in a repo directory. It knows where conventions live. It knows where tech decisions live.

It pulls the ticket. It loads the conventions. It surfaces relevant library docs based on the detected stack. It presents the loaded context for the developer to confirm.

The developer confirms. The agent plans, implements, runs the tests, runs a code-health review, runs a security review, compares the implementation against the original plan, pushes the branch, opens the pull request, transitions the ticket to In Review, and tells the developer to come review.

The developer set the goal. The agent did the boring middle. The developer reviewed the result.

This is reachable today. It’s not the autonomous-with-review future yet, since there are still human checkpoints throughout. But notice how much has been collapsed compared to the State 2 reality.

State 3 isn’t a software-only thing. It’s a category-level thing. And it’s the foundation everything else gets built on, including the autonomous-with-review future we discussed earlier.

How to actually get there

If you’re wondering how to move from where you are today, here’s the path I’d recommend, in order.

- If you’re starting from ad hoc, install an off-the-shelf opinionated plug-in. Don’t skip this step. Superpowers, the Anthropic Legal plug-in, whichever is closest to your domain. The methodology bump alone is worth it, and it gives you something concrete to react against.

- Use it. Find where it bends to your world and where it breaks. Notice the moments when you’re typing context every task. Notice when the agent guesses wrong because it doesn’t know your conventions. Notice when you wish it would just call your CLM, your CI, your knowledge base, your chat. Those are the gaps.

- Use that learning to map your real requirements. Your workflows. Your environment. Your tools. Your knowledge sources. Your policies. Your gates. The off-the-shelf plug-in was your teacher. Now the lesson is what YOUR plug-in needs to embody.

- Build your own. It might be a fork or clone of an existing plug-in that’s “close enough” plus the integrations and rules YOUR team needs. It might be designed from scratch if the off-the-shelf option requires too much modification. Either way, Claude (Code or Cowork) does most of the actual building. You drive the design.

The objection I hear at this point is always the same: “Isn’t building our own plug-in a huge engineering lift?”

No. It’s a design lift. Claude does the implementation. Your job is to know your environment and your workflows well enough to specify them. That’s a job you’re already doing every time you re-teach the agent. You’re just consolidating the teaching.

And as your workflow evolves, your plug-in evolves with it. The maintenance is a conversation, not a project.

The math is the same regardless of where you sit

The market reaction that followed the January 30, 2026 release was a signal. Investors saw plug-ins clearly enough to reprice the SaaS sector by hundreds of billions of dollars in days. They saw what’s coming.

What they were pricing in wasn’t off-the-shelf Claude plug-ins. It was the autonomous-with-review future those plug-ins point toward. Stripe’s engineering team is already operating there. More teams will follow.

If you take only a few things from this post, take these.

- The real destination is autonomous-with-review, not faster human-in-the-loop.

- Off-the-shelf opinionated plug-ins are a launch pad, not the destination. That’s not a flaw. It’s their scope.

- Customization is unavoidable for three structural reasons: the repeatability tax, the compounding token cost, and the inevitability of workflow evolution.

- The path forward is to install something off-the-shelf, learn from it, map your real requirements, then build your own with Claude doing the implementation work.

- The math is the same whether you’re in engineering, legal, finance, HR, support, or running ops for a small business out of a single laptop.

Off-the-shelf opinionated Claude plug-ins are good. They’re a real step forward from ad-hoc, and the best of them encode methodologies you’d struggle to come up with on your own. They are also, by design, generic. That makes them a launch pad. It does not make them a destination.

Your destination has to be yours. And the time to start moving toward it is now, because the trajectory we just talked about isn’t slowing down to wait for you.